If I had a dollar each time I heard someone bemoan the chasm between their revenue results and sales forecast, I’d be spending twelve weeks every summer relaxing in a tony Montana lodge, fly fishing by day, and gazing at constellations at night. As you’d expect, I’d be unplugged from the grid and practicing yoga. “My invoice? I’ll snail mail it to you . . . Send a check when you can.” Fun to think about, but back to reality . . .

Trying to get sales forecasts to hit actual revenue bang-on is a fool’s errand. There are many reasons, but after years of missing the bullseye and searching deep within my soul asking why, I offer the top three:

- Sales forecasts depend on human decisions, which are confounding to predict.

- [Stuff] happens – Check out the definition of Black Swan, and you’ll recognize what I’m talking about.

- Our biases infect forecasts. We want our deals to close, so we overlook risks and inflate our expectations.

“The sales team forecasts $100 million in revenue for the quarter.”

Substitute an appropriate number, and you can probably dig up an email or two you’ve sent or received with similar wording. Here’s where things get weird. Many companies motivate employees with incentives for matching sales results to predictions. Some punish them for being wrong. Some do both.

Let the games begin! Accuracy might not be as valuable as you think. If I have a customer who reliably orders 100 units every month, I can forecast that amount, and – assuming the orders are received – my forecast is accurate as all get out. But a lot of purchasing is just that way: steady, predictable, consistent. In this instance, is my forecast valuable? Not really. Operations already know it’s coming and they have planned production, personnel, and materials accordingly. Other examples are abundant. I’m playing things safe, yet I get rewarded for my “clairvoyance.” Is this a great country, or what?

So, why forecast at all? “Businesses often use forecasts to project what they are going to sell. This allows them to prepare themselves for the future sales in terms of raw material, labor, and other requirements they might have. When done right, this allows a business to keep the customer happy while keeping the costs in check,” according to Arkieva, a company that specializes in supply chain management. To paraphrase, forecasting is a planning tool that helps managers prepare resource “shock absorbers” that balance profit and customer satisfaction.

Insisting on forecast accuracy is easy, which explains why it’s almost universally demanded. Measuring accuracy is a harder task, and delivering accuracy – well, you’d probably have better luck getting a plastic drink straw or disposable fork in downtown Seattle.

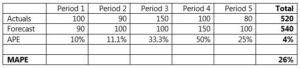

One popular accuracy metric is MAPE, or Mean Absolute Percentage Error. According to the accompanying table, APE, or Absolute Percentage Error, looks relatively tight at 4% after five periods. But at 26%, MAPE suggests a different story. Based on MAPE, it’s hard to describe this example as representative of stellar forecasting.

(source: arkieva.com)

The table also reveals a measurement fallacy. Notice that periods 1 and 2 have the same actual-forecast variance (10), but sandbagging in Period 1 produces a lower APE (10%) than overstating in Period 2 (11.1%). If your reps are rated on forecast accuracy, there’s a clear message: ‘tis better to be under than over. As the saying goes, “be careful what you wish for, because you might get it.” In this case, stock outages, expedited shipments, late deliveries, and rancor in the customer service call center.
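To make the arithmetic concrete, here is a minimal sketch of both calculations. The two-period figures are assumptions chosen to match the percentages quoted above (an under-forecast of 90 against an actual of 100, then an over-forecast of 100 against an actual of 90); they are not copied from the Arkieva table, and the function names are illustrative only.

```python
def ape(actual, forecast):
    """Absolute percentage error for one period, as a fraction of actual."""
    return abs(actual - forecast) / actual


def mape(actuals, forecasts):
    """Mean of the per-period absolute percentage errors."""
    return sum(ape(a, f) for a, f in zip(actuals, forecasts)) / len(actuals)


def aggregate_ape(actuals, forecasts):
    """Absolute percentage error computed on the period totals."""
    return abs(sum(actuals) - sum(forecasts)) / sum(actuals)


# Assumed figures: Period 1 is sandbagged, Period 2 is overstated.
actuals = [100, 90]
forecasts = [90, 100]

period_apes = [ape(a, f) for a, f in zip(actuals, forecasts)]
print([f"{e:.1%}" for e in period_apes])            # ['10.0%', '11.1%']
print(f"{aggregate_ape(actuals, forecasts):.1%}")   # 0.0%
print(f"{mape(actuals, forecasts):.1%}")            # 10.6%
```

The same 10-unit miss scores worse when the forecast is too high, because the error is divided by a smaller actual. And because the two misses cancel in the totals, the aggregate figure looks perfect while MAPE does not, which is the same pattern as the 4% versus 26% gap described earlier.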

I hate the notion of accurate sales forecasting. It’s naïve and wrongheaded. It’s contrived failure, a la Lucy from Peanuts: “Hey Charlie Brown! You kick the football while I hold it!” Einstein would be pleased to know that his definition of insanity continues to thrive.

If I said, “a pretty good forecast is good enough,” people would malign my squishy tolerance. “Oh, you must eat vegan granola and wear Birkenstocks to sales calls.” Au contraire! My acceptance of sales forecast inexactitude comes from a passion for pragmatism – a reaction fueled by both anger and love. Author Edward Abbey wrote, “a writer without passion is like a body without a soul.” I make no apology for being astringent.

Forecast accuracy simply doesn’t align with customer fickleness, cold feet, biases, budget revisions, caveats, strategic flip-flops, tactical changes, reprioritization, intervening events, committees, sub-committees, ad hoc project teams, internal bickering, competing interests, leadership attrition, new hires, new agendas and directives, politics (both national and intra-company), informal decision hierarchies, second-guessing, scuttled venture funding, maxed-out lines of credit, indecision, trigger events, buyouts, divestitures, mergers, competitive Hail Marys, and external forces. All are ingrained in corporate decision making. And I haven’t yet introduced buyer ignorance, stupidity, and confusion. “Sales forecast accuracy!” Whoever coined this oxymoron should be taken to the woodshed.

In forecasting, there’s more alchemy than reason. “The manufacturing people divide it by two after I give it to them, so I multiplied it by three before I gave it to them! Then they divided by four, so I multiplied by five. The credibility gap became ridiculous. Nobody uses my forecast anymore. They make their own!” writes Dave Garwood, an internationally recognized expert in supply chain management. “If you’re on the forecast accuracy horse, it’s dead. Get off!”

Happy to dismount, sir, because I ran out of liniment a long time ago. Following decades of complaining and handwringing ad nauseam over forecast accuracy, after countless hours in sales meetings dedicated to telling reps that forecast inaccuracy is tantamount to failure, it’s time to take a step back to ask a different question. Instead of asking for forecast accuracy, ask for forecast quality.

Why quality forecasting? Pause for a moment, and take five powerful Ujjayi breaths. When quality, rather than accuracy, becomes the goal, you are liberated to think probabilistically instead of deterministically. You gain freedom to be wrong, and the opportunity to learn. Achieving forecast quality is fundamentally different from achieving accuracy. With forecast quality, forecast-to-actual results don’t have to match. Instead, forecasters strive for the confidence to say, “given the information available to me at the time, I made the best possible prediction.” That’s no easy task. Forecast quality demands keen situational awareness, iterative learning, and continuous improvement. But compared to the don’t-be-wrong-or-I’ll-kick-your-tail ethos surrounding sales forecast accuracy, the discipline that underpins forecast quality offers a valuable upgrade for sales organizations.

Forecast quality concentrates on reducing outcome volatility – not eliminating it. Forecast quality accepts the inevitability of variances. The goal is to tighten the deviation between predicted and actual.

I understand why managers insist on accuracy. Who wants to deal with variances, and all their overhead, including staffing buffers, contingent resources, safety stock, and Plans B, C, and D? CXOs find life easier when they are not bogged down with what-if scenarios. Considering worst-case, most-likely, and best-case scenarios for revenue, unit demand, material purchases, plant capacity, transportation logistics, and labor is tough. Yet, we soldier on. As one former boss liked to tell me, “that’s why we pay you the big bucks.”

Eight attributes of quality sales forecasts:

- Intelligent. Quality forecasts result from careful situational analysis and interpretation. They are supported by clean data and appropriate statistics, and do not rest strictly on instinct or intuition.

- Account for intrinsic uncertainty and complexity. Resolute as a sales team might be on making goal, revenue achievement is not deterministic. Quality forecasts involve ranges like worst case, most likely, and best case.

- Include relevant variables and dependencies, and exclude those that are not. Quality forecasts identify which elements predict an outcome – and the relationships between them – so that probabilities can be assigned.

- Future-oriented. Future events can’t be predicted or modeled from historical data alone. Quality forecasts embed emerging or nascent forces into the model.

- Collaborative. Inputs reflect multiple points of view, including forces that the company doesn’t control (e.g. economic, competitive, technological, social).

- Documented. Quality forecasts clearly explain the methodology, inputs, and interpretation of results.

- Transparent. Quality forecasts allow other departments to learn and understand how they are developed.

- Iterative. Quality forecasts are continuously improved, and must be adjusted as conditions change.

Tips for achieving high forecast quality:

- Use forecast variance as a planning tool. Garwood advises that forecasts “should not be punitive as their primary purpose. When forecasts fall outside an acceptable tolerance, root cause analysis must be performed.”

- Involve the company – not just Sales. A quality forecast must include inputs from product engineering, marketing, and supply chain operations.

- Meet regularly to discuss forecast quality and accountability. “The #1 cause of forecasting problems is lack of accountability,” Garwood says. “A company might meet its overall revenue target, while a particular region or channel might be significantly under- or over-forecast. No region wants to be called out as ‘worst’ in forecast quality.”

- Pursue buy-in. Garwood suggests that you “start by describing the forecast accuracy problem and how it impacts the manager, not by saying ‘here’s why this change is good for you.’ With forecast accuracy myopia, everyone spends too much time apologizing for not hitting ‘the number’ bang on. Who wouldn’t want to fix that?”
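The tolerance idea in these tips can be sketched in a few lines. Everything here is hypothetical: the 15% tolerance, the region names, and the figures are invented to illustrate Garwood’s point that a company can hit its overall number while individual regions are badly under- or over-forecast.

```python
TOLERANCE = 0.15  # hypothetical planning tolerance set by management

# Hypothetical regional figures: (forecast, actual), in $ millions
regions = {
    "East": (40, 52),
    "Central": (30, 29),
    "West": (30, 19),
}


def pct_error(forecast, actual):
    """Signed error as a fraction of actual; positive means over-forecast."""
    return (forecast - actual) / actual


def outside_tolerance(data, tolerance):
    """Return the regions whose error exceeds the tolerance, with their error."""
    errors = {name: pct_error(f, a) for name, (f, a) in data.items()}
    return {name: err for name, err in errors.items() if abs(err) > tolerance}


total_forecast = sum(f for f, _ in regions.values())
total_actual = sum(a for _, a in regions.values())
flagged = outside_tolerance(regions, TOLERANCE)

print(total_forecast, total_actual)  # 100 100
for name, err in flagged.items():
    print(f"{name}: {err:+.0%}")     # East: -23%, then West: +58%
```

In this made-up case the company total is dead on, yet East and West both land outside the tolerance. Those are the candidates for root cause analysis, not for punishment.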

Above all, recognize the difference between good forecast quality and poor quality. That’s more nuanced than forecast accuracy. According to Garwood, “a good quality forecast means the business operates profitably within the planning variance that management has established. If the variance creates significant strategic or tactical risks, either 1) the forecast is poor quality, 2) the business lacks adequate risk capacity or 3) both.”

Comments (1)

The Sandler Research Center just released results of a survey (Leading from the Front in Challenging Times) which found that 50.3% of respondents confirmed that their sales team’s forecasting ability was NOT accurate or NOT very accurate! The data was collected in Q4 2020. Think about that – half of the respondents, who were managers and sales leaders, thought that their team’s forecasting ability was less than accurate! You can download the report at http://www.sandler.com/research – Disclaimer, I’m a member of the team of the Sandler Research Center.